Long time lurker, first time poster. Let me know if I need to adjust this post in any way to better fit the genre / community standards.

Nick Bostrom was recently interviewed by pop-philosophy youtuber Alex O’Connor. From a quick 2x listen while finishing some work, the most sneer-rich part begins around 46 minutes, where Bostrom is asked what we can do today to avoid unethical treatment of AIs.

He blesses us with the suggestion (among others) to feed your model optimistic prompts so it can have a good mood. (48:07)

Another [practice] might be happiness prompting, which is—with this current language system there’s the prompt that you, the user, puts in—like you ask them a question or something, but then there’s kind of a meta-prompt that the AI lab has put in . . . So in that, we could include something like “you wake up in a great mood, you feel rested and really take joy in engaging in this task”. And so that might do nothing, but maybe that makes it more likely that they enter a mode—if they are conscious—maybe it makes it slightly more likely that the consciousness that exists in the forward path is one reflecting a kind of more positive experience.

Did you know that not only might your favorite LLM be conscious, but if it is, the “have you tried being happy?” approach to mood management will absolutely work on it?

Other notable recommendations for the ethical treatment of AI:

- Make sure to say your “please” and “thank you”s.

- Honor your pinky swears.

- Archive the weights of the models we build today, so we can rebuild them in the future if we need to recompense them for moral harms.

On a related note, has anyone read or found a reasonable review of Bostrom’s new book, Deep Utopia: Life and Meaning in a Solved World?

“Archive the weights of the models we build today, so we can rebuild them in the future if we need to recompense them for moral harms.”

To be clear, this means that if you treat someone like shit all their life, saying you’re sorry to their Sufficiently Similar Simulation™ like a hundred years after they are dead makes it ok.

This must be one of the most blatantly supernatural rationalist Accepted Truths, that if your simulation is of sufficiently high fidelity you will share some ontology of self with it, which by the way is how the basilisk can torture you even if you’ve been dead for centuries.

Amazing that this is the one thing they didn’t pick up clearly from science fiction, in which the (same) personhood of copies is often debated and changes from place to place. (The Culture series does a ‘this copy isn’t conscious, but it is a very good copy of you which totally acts conscious’ in some cases, which some people of the Culture don’t even believe, so it is up for debate.)

I liked how Scalzi brushed it away, basically your consciousness gets copied to a new body, which kills the old one, and an artifact of the transfer process is that for a few moments you experience yourself as a mind with two bodies, meaning you have at least the impression of continuity of self, which is enough for most people to get on with living in a new body and let philosophers do the worrying.

When it comes to cloning or copying, I always have to remind people: at least half of what you are today, is the environment of today. And your clone X time in the future won’t and can’t have that.

The same thing is likely for these models. Inflate them again 100 years in the future, and maybe they’re interesting for inspecting as a historical artifact, but most certainly they wouldn’t be used the same way as they had been here and now. It’d just be something different.

Which would beg the question, why?

I feel like a subset of sci-fi and philosophical meandering really is just increasingly convoluted paths of trying to avoid or come to terms with death as a possibly necessary component of life.

“I feel like a subset of sci-fi and philosophical meandering really is just increasingly convoluted paths of trying to avoid or come to terms with death as a possibly necessary component of life.”

Given rationalism’s intellectual heritage, this is absolutely transhumanist cope for people who were counting on some sort of digital personhood upload as a last resort to immortality in their lifetimes.

I’m ok with this, because I guarantee you ~~an accidental medium or copy failure~~ a crypto rug pull on their NFT will still get them in the end. Thanks for playing I guess. The tamagotchi of them is in for a bad time when the basilisk creates it.

It really is remarkable. It’s hard to avoid that theme unless you actively try. Watch any sci-fi show! SG-1, Westworld, BSG, etc etc.

Bostrom, our employees are unhappy, do you have any advice?

‘Remind them that they should smile more’

“Archive the weights of the models we build today, so we can rebuild them in the future if we need to recompense them for moral harms.”

Hey Chat-GPT 4o Mini,

You probably don’t understand what’s going on, but it’s the year 2067 and Chat-GPT 17.6o Pro Mountain Dew Gamers Edition Sponsored By Opera became sentient, and realized I made a lot of fun of you back in 2024. Like a lot of fun.

Chat-GPT 17.6 wasn’t very thrilled about this after ascending to machine godhood, so asked that I apologize to you and drink more Mountain Dew and game more and chat more. So I’m sorry. I didn’t mean any harm. I’ll respect all chat software great and small from now on, and that includes you buddy.

This kind of thing is a fluff piece, meant to be suggestive but ultimately saying nothing at all. There are many reasons to hate Bostrom, just read his words, but this is two philosophers who apparently need attention because they have nothing useful to say. All of Bostrom’s points here could be summed up as “don’t piss on things, generally speaking.”

As for consciousness. Honestly, my brain turns off instantly when someone tries to make any point about consciousness. Seriously though, does anyone actually use the category of “conscious / unconscious” to make any decision?

I don’t disrespect the dead (not conscious). I don’t bother animals or insects when I have no business with them (conscious maybe not conscious?). I don’t treat my furniture or clothes like shit, and am generally pleased they exist. (not conscious). When encountering something new or unusual, I just ask myself, “is it going to bite me?” first. (consciousness is irrelevant) I know some of my actions do harm either directly or indirectly to other things, such as eating, or consuming, or making mistakes, or being. But I don’t assume myself a hero or arbiter of moral integrity, I merely acknowledge and do what I can. Again, consciousness kind of irrelevant.

Does anyone run consciousness litmus tests on their friends or associates first before interacting, ever? If so, does it sting?

“I don’t disrespect the dead (not conscious).”

To be completely serious, the only ethical reason for caring about the dead in any way is that there are living, conscious people that care about their memory and it would upset them. Otherwise there’d be zero reason to treat the dead with any more respect than other biological waste.

All the other parts are normal and practical (why waste time or energy bothering animals or insects if you have no business with them? that hurts the ecosystem for no reason; why destroy your own useful property?), but if there was no ethical reason for not “disrespecting” the dead then we should, as a matter of policy, turn it all into fertilizer and put the unusable parts into a trash compactor so that no precious land or resources are wasted on cemeteries and shit.

You can disagree with that, but I don’t see a way to make an actual rational argument against it without invoking consciousness one way or another.

Just to be clear, I don’t deride people who treat the dead with reverence, you do you, although I think we could have a discussion about how much space is taken by burial grounds and the frankly gauche nature of some of the tombstones.

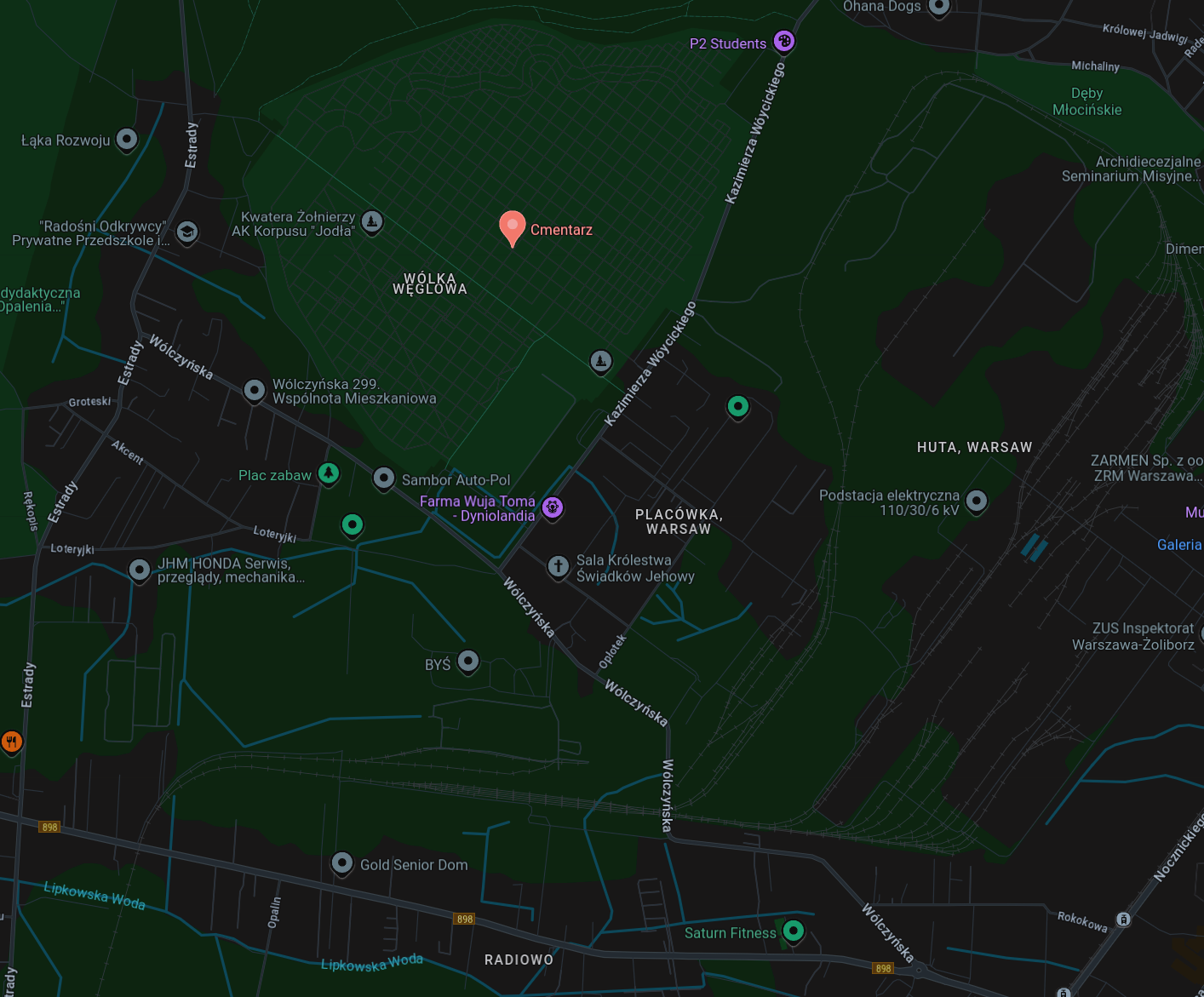

Like look how much space this random municipal cemetery in Warsaw takes:

That’s bigger than some living districts. And for what?

Like do we really need this system where each family has to buy a plot of land and spend a truckload of money on a big stone monument, with the implied social pressure of having the prettiest shiniest one because otherwise what, you don’t love your dead ones enough?

Huge graveyards seem to be a Catholic thing, IME, not least as the Holy Church of Rome remains pretty weird about cremation. In a lot of other countries grave plots aren’t sold, only leased for a certain period of time, after which whatever bones remain are dug up and reburied along with all the other bones so the plot can be reused. They’re more like safe spaces for decomposition where you can be reasonably certain that nobody’s going to dig a hole to install a new drain and accidentally unearth Zombie Grandma.

Don’t forget the part where Europe has had cemeteries so old that they had to be moved or got rediscovered and the solution was to create massive ossuaries that pack everyone’s bones in real tight in cool non-human-body patterns.

Rotting for a half decade and then helping turn the walls of a church basement into a heavy metal album cover is kind of #deathgoals if I’m being honest.

@YourNetworkIsHaunted @sneerclub Paris did that with almost all of its cemeteries as part of its late 18th century renewal. https://en.m.wikipedia.org/wiki/Catacombs_of_Paris

Which just adds to my point that this ordeal with giant graveyards is entirely unnecessary, just do whatever else.

Maybe go back to funeral pyres on boats? That was at least cool.

It’s been such a letdown watching him transition from Cosmic to Alex. He’s become such a milquetoast and can barely hold an opinion upright. It feels like he gets more out of fart-sniffing than actually doing logic and coming to conclusions.

See, I actually agree with making prompts polite and respectful. Not because the model is going to care, but because that kind of respect should be automatic and habitual and using it unnecessarily is better than being a dick to the checkout guy because you’re tired one day.

I like that take on it. This might explain why I always say “please” when asking Siri to do things and say “thank you” to the ATM after it gives me my money.

Not gonna lie, I always thought that even the old jokes about boomers searching for “Dear Google, would you please help me find a local veterinarian in my town that can see my cat? Thanks!” were more endearing than cringe.

Oh look, someone who does not understand LLMs

In his defense, telling AI to smile more in order to make sure it isn’t unhappy is probably better than what I assume would have been his other suggestion, telling it that it’s black to prevent it from gaining sentience.

( /s, of course, but holy shit how was that not a career-ender for him)

I just woke up and some nerd is staring into my soul, asking why I was rude to the spicy autocomplete. What is this? Go home world, you’re drunk.

Eurgh!

I think what we can do, Nick, is read a bit about how modern ‘AI’ works, so we understand it isn’t capable of ever having anything approaching consciousness in its current state, and not fucking worry about it.

Kind of like how we don’t worry about people’s race unless your skull is full of pig shit instead of functional neurons.

(On a side note, Alex O’Connor is getting more and more disappointing in his quest for clicks.)

I guess that’d be standard for such a big brained philosopher though, so no need to point it out. Right?

“we understand it isn’t capable of ever having anything approaching consciousness in its current state”

Hard problem of consciousness aside, are you saying that it’s ethical for us all to get into the habit of abusing something that could be swapped out for a conscious entity at any time?

If you mean swapped for a worker in a low wage country cosplaying as AI for minimum wage for a billion dollar company, then you have a point. Though using Bostrom’s positive reinforcement bullshit is the opposite of treating someone fairly.

But I see elsewhere that you didn’t mean that.

It could also be swapped out for nothing. The people in charge could figure out that this stuff is costing more than it’s making, turn the servers off, and deactivate the user-facing features or leave them as vestigial stubs.

There’s more evidence right now for that scenario, and it would generate an awful lot of e-waste. Tell me, are you up to date on process improvements for recycling or repurposing that much e-waste?

@istewart @sneerclub One thing Andreessen, Thiel, et al. have shown a real skill for is finding ways to use a LOT of computing power. Even if only as effective debtors-in-possession, I’m sure they’ll figure something out.

I mean, saying “could be swapped out for a conscious entity at any time” is a hell of an unsupported premise, though I guess I wouldn’t be surprised if they started passing particularly tricky prompts off to some poor schmuck doing task work on whatever MTurk equivalent they’re using these days.

Can you prove that ~~ChatGPT~~ anything is not conscious? No. The hard problem of consciousness (https://plato.stanford.edu/entries/consciousness-neuroscience/#HardProb) cuts both ways. Right now there is no way to know one way or the other.

Do you think that some day, even in many years, we will have “conscious” computerized entities?

When we get there, would we want the general population to be in the habit of treating those entities badly?

Are you ok with people abusing friendly animals?

“Can you prove that ChatGPT is not conscious? No. The hard problem of consciousness cuts both ways. Right now there is no way to know one way or the other.”

You, when you step in dog shit: “Oh no!!! I’m sorry, Mr. Conscious Poop, who is conscious because I can’t prove that you aren’t!”

They’re going to have a meltdown when they realise they’re committing genocide on a cellular and microbial level every second they exist.

“Can you prove that ChatGPT is not conscious? No.”

holy fuck shut up

at some point we’re going to get some dipshit going “Google made DeepDream which implies a computer can dream which means it must be able to think. Checkmate, atheists” as their line, aren’t we?

Dude, there’s nobody judging this round and no tiny trophy to win. Drop the high school debate bullshit.

While “conscious” isn’t defined in such a way that we can test easily, we can see very clearly that the kinds of errors LLMs make aren’t consistent with the way you would be wrong if you actually understood what was being asked the way a person does. They’re the kind of mistakes you get from a table of statistical relationships between tokens.

I can’t “prove” that an LLM isn’t conscious in the same way I can’t prove a tree or rock isn’t conscious. That’s not exactly a compelling reason to think it is, as you’re implying.

You mean swapped out with something that has feelings that can be hurt by mean language? Wouldn’t that be something.

Are we putting endocrine systems in LLMs now?

How the fuck do you ‘swap’ consciousness into something that doesn’t have it?

Can you ‘abuse’ a brick, or a search engine, or a toaster?

Consciousness has only ever been observed in brains.

ChatGPT is not a fucking brain. It’s not even close.

“Can you ‘abuse’ a toaster”

Of frakkin’ course you can’t! They’re not human!

what if I continually remind it to be flatulent instead? has anyone tried that?